You recently wrote a peer-reviewed paper on Fathom’s flood mapping technology. What inspired the need to publish this paper in a peer-reviewed journal?

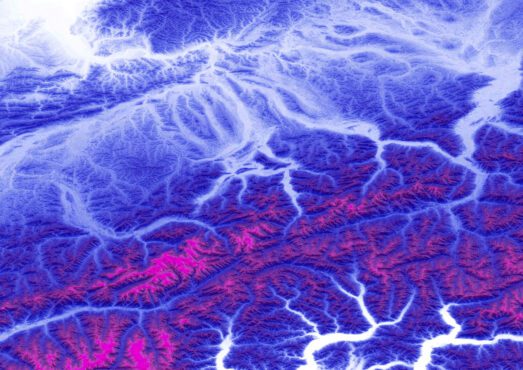

Advances in computational capacity, the availability of relevant data, and the algorithms we use to handle that data mean large-scale flood models have come to the fore in recent years. A number of commercial and academic flood models have been developed over the past decade, but none had undergone comprehensive testing to see whether they provide useful information (what we call ‘validation’). This paper is the first of its kind to do just that.

Why validate your model?

It’s all very well plugging data into a flood model and producing some output delineating a flood extent, but we need to know that what the model produces is valid before we can have any faith in its applications. Fathom is a company born of world-leading science, so we are committed to putting our products through rigorous testing.

How has Fathom produced such an effective model?

We ‘stand on the shoulders of giants’, to borrow Isaac Newton’s description of how intellectual progress is made. Fathom’s methods were first proposed in the Hydrology Research Group at the University of Bristol decades ago, and have been refined and improved ever since. Our chairman Prof. Paul Bates wrote a seminal paper in 2000, which forms the foundation of our methodology, and improvements to this core concept have been made by dozens of talented hydrologists. Essentially, it’s taken a lot of scientific elbow grease!

How were Fathom and its methods formed?

The methods used by Fathom were developed in the Hydrology Research Group at the University of Bristol, and members of the group founded the company. Everyone in the company is or was part of the Hydrology Research Group.

What does the paper mean for your industry?

The paper shows that there is skill to large-scale hydraulic modelling, so it is a vindication of the methodology we have pioneered. It’s the first firm indication we’ve had that we’re on the right track. This means our clients can have confidence in the data we provide them with and, more generally, other flood modellers can see the benefit of this approach. It’s no mean feat to get a paper published: you send it off for peer review – where scientists from across the globe scrutinise your work – and make changes in light of the feedback given. Importantly, the basis of our methodology was accepted.

How is this likely to change the way flood mapping works?

Testing of that sort had never been applied to a model of this scale. Back when Paul wrote his paper in 2000, hydrologists would traditionally model a small river reach and use data on a historical flood to validate that model. We have managed to validate a model that simulates flooding on every river in the USA, and demonstrated its value. In showing that this is a worthwhile field of enquiry, we have heralded a new era in hydraulic modelling.

Our aim next year is to turn our world-leading global flood hazard maps into a stochastic model framework that can be applied anywhere in the world. These kinds of tools and data are going to be critically important if we are to mitigate the seemingly increasing intensity of climate-driven natural hazards. Ultimately, we want to bridge the gap between academia and decision makers, giving decision makers access to cutting-edge tools as soon as they are created.