As part of Fathom’s ‘Meet the Team’ series, we talk to Niall Quinn.

Tell us about your role at Fathom, and a bit about your career to date?

My research career began with my PhD in coastal oceanography at Southampton University. This work focused on characterizing the role of tide-surge-wave interactions in modelled storm tide water levels, and assessed the suitability of model emulators for real-time forecasting. Following this, I moved to Bristol University to work with Paul Bates and Jeff Neal on a variety of flood-risk studies. After ~5 years of post-doctoral research I moved to Fathom and have been there ever since. As a developer at Fathom, I am involved in a variety of interesting projects related to flood risk. The key projects I have been involved in are the development of the US catastrophe model (Quinn et al., 2019) and the new current and future US hazard layers, produced as part of a large not-for-profit international group aiming to provide US homeowners with free flood risk information.

What led you to join Fathom?

Fathom was a natural progression from both a research and a social perspective. I had already spent ~5 years working with the Bristol hydrology team on flood risk research, and the move to Fathom allowed me to continue working in this field. Also, at the time, Fathom had limited expertise in coastal modelling, so I felt I could bring something new to the team. The fact that the company founders were already good friends of mine, and that the company was based in Bristol (where my family and I were settled), made this an even more appealing proposition. I had been interested in hearing all about Fathom from the guys since its inception, so when the chance came to get involved and help it grow, there was little hesitation.

What makes Fathom stand out from other companies who offer flood data?

Perhaps the key differentiator is the company ethos to be as transparent as possible. The groundwork for our models is decades of flood risk research from Bristol University (and collaborators) and we ensure that all subsequent developments are reported in open, peer-reviewed journal publications. This is important as not only does it ensure that what we do must pass a high level of expert critique, but it also means that a client can understand what it is we are providing them, not only the strengths but also the weaknesses. In my experience, this honesty about our products has been something that clients have been most excited about.

What does the next 12 months look like for Fathom?

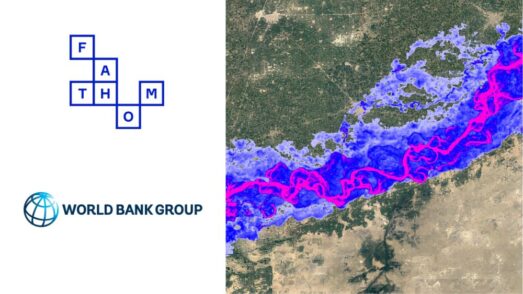

Exciting, I think! We are looking to develop in many different ways in the next year. For instance, we have a number of bids currently in review for some exciting projects; we will be developing our modelling approaches (e.g. I will be working on creating a truly multivariate stochastic US event set) and expanding our reach (e.g. we are developing pluvial, fluvial, extreme sea level hazard and catastrophe models for more regions, including Japan, Australia and the UK); and we will be expanding the First Street project to include further territories before producing updated risk estimates based on the latest climate projections. We will also continue our academic research collaborations through the supervision of PhD and MSc students and the production of academic journal publications. No doubt there are even more projects lined up that I am unaware of, so we are going to be busy!

What challenges is your industry likely to face over the next 12 months?

In the coming year I will be mainly focusing on the development of synthetic event catalogues for the US and other regions, as well as expanding and improving our representation of coastal extreme water levels. A key challenge facing these tasks is the creation of high-quality, high-resolution datasets across large (e.g. continental or even global) scales. For instance, few large-scale reanalyses of tide-surge-wave induced coastal water levels currently exist (particularly in gauge-poor regions), and obtaining the data required (e.g. sub-daily timeseries over many decades, across many locations) can be tricky. This is compounded by the fact that historical reanalysis only answers part of the problem; we actually want the same models to be used in conjunction with large synthetic event sets of low-frequency events (e.g. hurricanes) for both current and future climate scenarios, which is a huge computational undertaking. Fathom will need to either develop its own modelling frameworks or collaborate with those that have already done so. Models looking to provide these types of outputs within the public space are in development (e.g. see Muis et al., 2020); however, they are still in their infancy.