Our newly appointed Chief Research Officer, Dr Oliver Wing, summarises the recent contributions of Fathom scientists to academic literature.

2019 was a busy and exciting year for Fathom, and our labours bore fruit in the form of multiple peer-reviewed papers in the world’s most prestigious academic journals.

Our CRO, Ollie, discusses them below, including the paper that led to Fathom winning an award at the inaugural London Catastrophe Modelling Awards 2020 (category: best piece of research / evaluation / validation in the last year).

Fathom prides itself on being at the forefront of scientific research, and subjecting our methods to peer review by internationally recognised experts is central to our ethos as a company. Our 2019 publication record stands as testament to this.

We love to forge research partnerships that advance understanding in the field. Over the past year, we collaborated on research with the University of Bristol, The Nature Conservancy, the University of California, Davis, the Iowa Flood Center, the University of Bologna and the Wharton Risk Center. If you’d like to work with Fathom, contact us.

Forgive us for the long article, but there’s a lot to cover:

- Estimating flood exposure globally

- Forecasting the inundation from incoming storms

- Quantifying the economic benefits of floodplain conservation

- Identifying flood defences remotely

- Examining the quality of depth-damage functions

Here’s hoping the next 12 months will be just as productive!

Estimating flood exposure globally

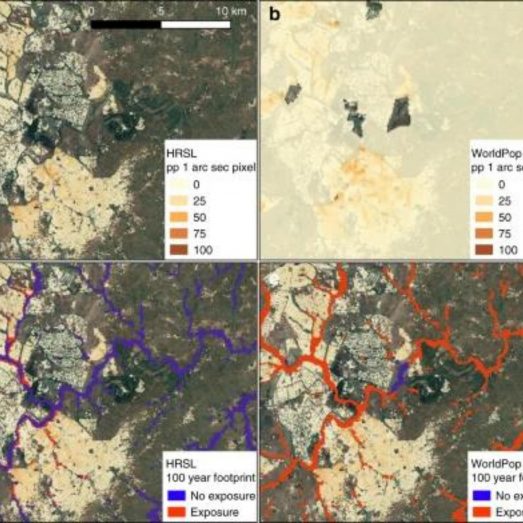

Last April, Fathom COO Dr Andy Smith led research published in Nature Communications that examined the effect of new population datasets on estimates of global flood exposure. Previous estimates of how many people live in flood-prone areas, particularly in data-poor developing countries, relied on coarse-resolution population density maps. These maps are typically generated by spreading census counts across relatively large administrative regions. Andy’s paper instead used population maps that distribute census information among buildings identified in sub-metre-resolution satellite imagery. The difference in exposure between the commonly used coarser data and the new high-resolution maps was stark: Andy found flood exposure in 18 developing countries to be significantly lower with the more accurate population data. In particular, exposure is much more spatially concentrated, and rural populations are substantially less at risk than previously thought.
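The contrast between the two kinds of population map can be illustrated with a toy calculation. This is a minimal sketch, not the paper's method: it assumes a single hypothetical administrative region split into ten grid cells, with an invented census total, invented building counts and an invented flood mask, and the helper names `exposure_uniform` and `exposure_by_buildings` are ours. Exposure here is simply the population allocated to flooded cells.

```python
from typing import Sequence


def exposure_uniform(census_total: float, flooded: Sequence[bool]) -> float:
    """Coarse approach: spread the census count evenly over every cell in the region."""
    per_cell = census_total / len(flooded)
    return per_cell * sum(flooded)


def exposure_by_buildings(
    census_total: float, buildings: Sequence[int], flooded: Sequence[bool]
) -> float:
    """Building-based approach: allocate the census count in proportion to buildings per cell."""
    total_buildings = sum(buildings)
    if total_buildings == 0:
        return 0.0
    return sum(
        census_total * b / total_buildings
        for b, is_flooded in zip(buildings, flooded)
        if is_flooded
    )


if __name__ == "__main__":
    # Hypothetical region: ten cells, the first four lie in a flood-prone valley.
    census_total = 1000.0
    flooded = [True] * 4 + [False] * 6
    # Hypothetical building counts from imagery: most buildings sit outside the valley.
    buildings = [0, 1, 0, 1, 20, 30, 25, 10, 8, 5]
    print("uniform:", exposure_uniform(census_total, flooded))
    print("building-based:", exposure_by_buildings(census_total, buildings, flooded))
```

In this invented example, most buildings lie outside the flooded cells, so the building-based allocation gives a far lower exposure than the uniform spread. That mirrors the direction, though not the magnitude, of the effect described above.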

Read the paper responsible for our recognition at the LCMA 2020 below.

Smith, A., Bates, P., Wing, O., Sampson, C., Quinn, N., & Neal, J. (2019), New estimates of flood exposure in developing countries using high-resolution population data. Nature Communications, 10, 1814.

Forecasting the inundation from incoming storms

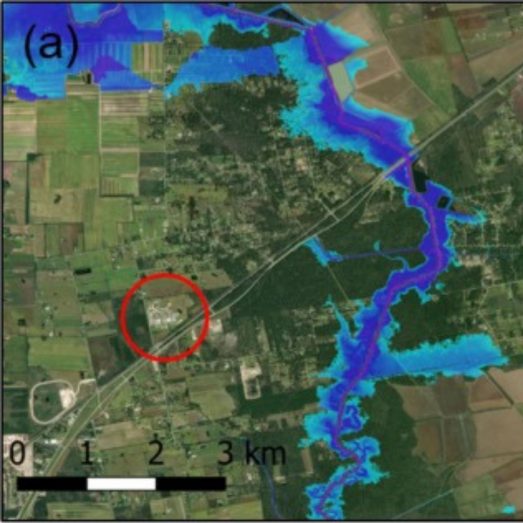

In July, Ollie led the Fathom research team in publishing our rapid event response methodology in the Journal of Hydrology X (the open-access mirror journal of the Journal of Hydrology). Official forecasts often “stop” at river levels or flows, giving little indication of the spatial extent of the resulting flooding. Using Hurricane Harvey as a test case, with meteorological and hydrological inputs from NOAA, Ollie validated two sets of hydraulic simulations against benchmark data gathered by the USGS and FEMA: the forecast itself and a ‘hindcast’ re-run with observed boundary conditions. We found that the model, which can be rapidly deployed over any region of the US, showed skill in delineating inundated areas, and that errors in simulated water levels depended largely on the quality of the elevation data.
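For readers unfamiliar with how flood maps are scored, the sketch below shows two standard measures of the kind used in such validations: the Critical Success Index (hits divided by hits plus false alarms plus misses) for the wet/dry extent, and the mean absolute error of simulated water levels against observed ones. We assume these metrics for illustration only; the paper's exact scoring set-up may differ, and the grid cells and water levels below are invented.

```python
from typing import Sequence


def critical_success_index(modelled: Sequence[bool], observed: Sequence[bool]) -> float:
    """CSI = hits / (hits + false alarms + misses) for binary wet/dry maps."""
    if len(modelled) != len(observed):
        raise ValueError("maps must have the same number of cells")
    hits = sum(m and o for m, o in zip(modelled, observed))
    false_alarms = sum(m and not o for m, o in zip(modelled, observed))
    misses = sum(o and not m for m, o in zip(modelled, observed))
    denominator = hits + false_alarms + misses
    # Cells dry in both maps are ignored, so a mostly dry domain cannot inflate the score.
    return hits / denominator if denominator else float("nan")


def mean_absolute_error(simulated: Sequence[float], observed: Sequence[float]) -> float:
    """Average absolute difference between simulated and observed water levels (metres)."""
    if len(simulated) != len(observed) or not simulated:
        raise ValueError("need matching, non-empty sequences")
    return sum(abs(s - o) for s, o in zip(simulated, observed)) / len(simulated)


if __name__ == "__main__":
    modelled = [True, True, True, False, False, True]  # hypothetical modelled wet/dry cells
    observed = [True, True, False, True, False, True]  # hypothetical benchmark extent
    print("CSI:", critical_success_index(modelled, observed))
    print("MAE (m):", mean_absolute_error([10.2, 11.0, 9.5], [10.0, 11.5, 9.5]))
```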

Wing, O., Sampson, C., Bates, P., Quinn, N., Smith, A., & Neal, J. (2019), A flood inundation forecast of Hurricane Harvey using a continental-scale 2D hydrodynamic model. Journal of Hydrology X, 4, 100039.

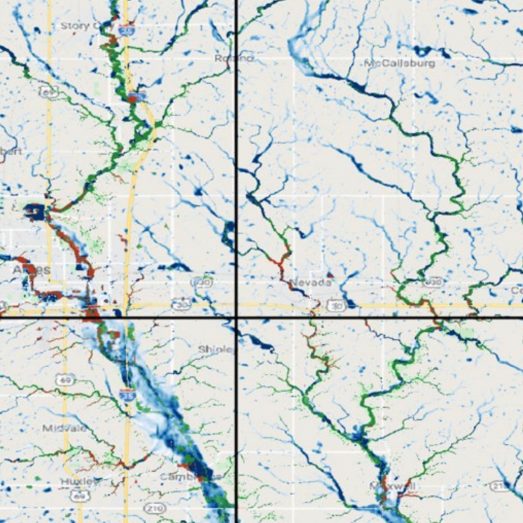

Quantifying the economic benefits of floodplain conservation

In December, Ollie and our close colleague Dr Kris Johnson of The Nature Conservancy published work in Nature Sustainability that computed the cost-effectiveness of buying up US floodplain land to prevent risky development. The calculation is conceptually simple: which is larger, the cost of acquiring this land at market value, or the bill for the inevitable flood damage to structures projected to be built there? Using Fathom’s US flood model, we found that, across the nation, the benefits (avoided future damages) exceed the costs (land purchase price). Because the model is so granular, acquisition can be targeted at areas where it is an exceptionally cost-effective strategy: tracts of land totalling twice the area of Massachusetts return $5 in savings for every $1 spent on conservation. Since this analysis counts only direct structural damage, and not the many ecosystem and recreational benefits of floodplains, we expect the true benefits of conservation to be much greater.
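The comparison at the heart of the study can be written as a benefit-cost ratio: the present value of the flood damage avoided by not building, divided by the price of the land. The sketch below assumes a constant expected annual damage, a fixed discount rate and a fixed time horizon; these simplifications, the parcel figures and the function names are hypothetical and are not the paper's assumptions.

```python
def present_value(annual_amount: float, rate: float, years: int) -> float:
    """Discounted sum of a constant annual amount received at the end of years 1..years."""
    return sum(annual_amount / (1 + rate) ** t for t in range(1, years + 1))


def benefit_cost_ratio(
    expected_annual_damage: float, land_cost: float, rate: float, years: int
) -> float:
    """Avoided damages (present value) per dollar spent acquiring the land."""
    if land_cost <= 0:
        raise ValueError("land_cost must be positive")
    return present_value(expected_annual_damage, rate, years) / land_cost


def cost_effective_parcels(
    parcels: dict[str, tuple[float, float]], rate: float, years: int, threshold: float
) -> list[str]:
    """Names of parcels whose benefit-cost ratio meets or exceeds the threshold."""
    return [
        name
        for name, (ead, cost) in parcels.items()
        if benefit_cost_ratio(ead, cost, rate, years) >= threshold
    ]


if __name__ == "__main__":
    # Hypothetical parcels: (expected annual damage to future structures, purchase price), in $.
    parcels = {"riverside": (10_000.0, 100_000.0), "upland": (1_000.0, 80_000.0)}
    print(cost_effective_parcels(parcels, rate=0.03, years=50, threshold=1.0))
```

Raising `threshold` is one simple way to express "targeting": only parcels that return at least that many dollars of avoided damage per dollar spent are kept.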

You can read the paper here. [NOTE: unfortunately this paper is not open access; please contact us for a preprint copy.]

Johnson, K., Wing, O., Bates, P., Fargione, J., Kroeger, T., Larson, W., Sampson, C., & Smith, A. (2019), A benefit–cost analysis of floodplain land acquisition for US flood damage reduction. Nature Sustainability, 3, 56-62.

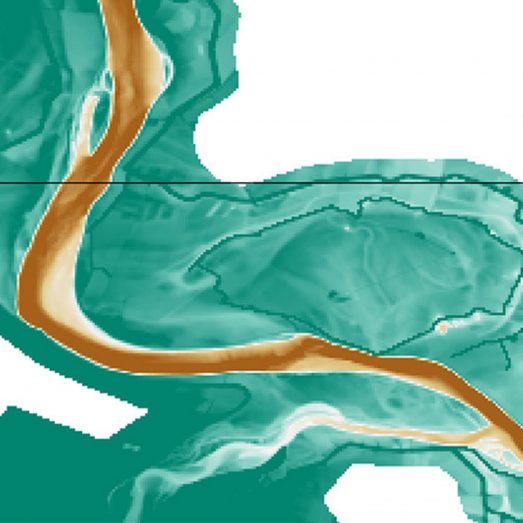

Identifying flood defences remotely

December 2019 was a productive month. We published again, this time in Water Resources Research, detailing a new approach to detecting levees and flood walls from elevation data. This was an enormous piece of work, completed in collaboration with the University of Bologna and the Iowa Flood Center. Ollie devised an efficient probabilistic algorithm for sampling “levee-like features” from high-resolution (3-10 m) terrain data, training it against the US Army Corps of Engineers’ National Levee Database. The output informed a new way of coarsening the hydraulic model grid (necessary for computational reasons): pixels suspected of being levees, or otherwise of hydraulic significance, keep their elevations, while the remaining pixels are summarised by a measure of central tendency when they are aggregated. On average, errors in levee crest elevation fell from over 1 m to 0.05 m, and inundation model skill improved in urban areas. This work fills a significant gap in our ability to produce accurate flood estimates, allowing local protection information to be retained while simulating at large spatial scales.
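A much-simplified version of the aggregation step might look like the sketch below. It assumes non-overlapping square blocks, a boolean mask of pixels already flagged as levees, and a rule whereby each coarse cell containing flagged pixels takes the highest flagged elevation as its crest, while other cells take the median. The function name and these rules are ours for illustration; the paper's probabilistic detection and exact aggregation scheme are not reproduced here.

```python
from statistics import median

Grid = list[list[float]]
Mask = list[list[bool]]


def coarsen_preserving_levees(dem: Grid, levee_mask: Mask, factor: int) -> Grid:
    """Aggregate factor x factor blocks: keep the levee crest if present, else take the median."""
    rows, cols = len(dem), len(dem[0])
    if rows % factor or cols % factor:
        raise ValueError("grid dimensions must be divisible by factor")
    coarse: Grid = []
    for r0 in range(0, rows, factor):
        coarse_row = []
        for c0 in range(0, cols, factor):
            cells = [
                (dem[r][c], levee_mask[r][c])
                for r in range(r0, r0 + factor)
                for c in range(c0, c0 + factor)
            ]
            levee_z = [z for z, is_levee in cells if is_levee]
            if levee_z:
                # Preserve the crest so the coarse cell still stands at levee height.
                coarse_row.append(max(levee_z))
            else:
                # Ordinary terrain: a central-tendency summary of the fine pixels.
                coarse_row.append(median([z for z, _ in cells]))
        coarse.append(coarse_row)
    return coarse


if __name__ == "__main__":
    dem = [
        [10.0, 10.0, 12.0, 11.0],
        [10.0, 15.0, 11.0, 13.0],
        [9.0, 9.0, 8.0, 8.0],
        [9.0, 9.0, 8.0, 10.0],
    ]
    levees = [[False] * 4 for _ in range(4)]
    levees[1][1] = True  # hypothetical levee pixel at 15 m
    print(coarsen_preserving_levees(dem, levees, 2))
    no_levees = [[False] * 4 for _ in range(4)]
    print(coarsen_preserving_levees(dem, no_levees, 2))  # the 15 m crest is lost to the median
```

Comparing the two runs shows why the flagging matters: without it, the narrow 15 m crest is smoothed away to 10 m in the coarse grid.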

Wing, O., Bates, P., Neal, J., Sampson, C., Smith, A., Quinn, N., Shustikova, I., Domeneghetti, A., Gilles, D., Goska, R., & Krajewski, W. (2019), A new automated method for improved flood defense representation in large-scale hydraulic models. Water Resources Research, 55, 11007-11034.

Examining the quality of depth-damage functions

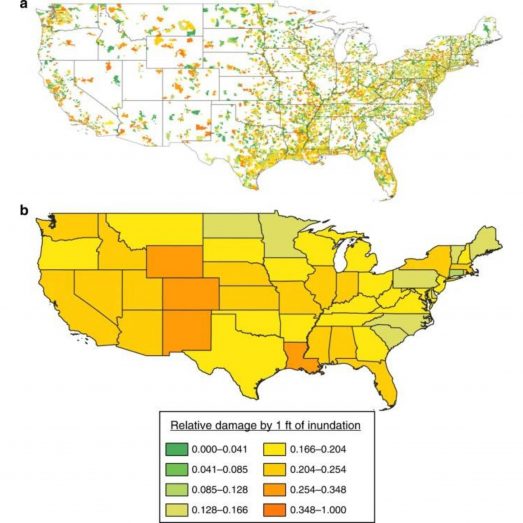

Our most recent work, published in March 2020 in Nature Communications, took a deep dive into the validity of the vulnerability curves the flood risk community uses to translate hydrology (depths of water) into economics (flood damage). Ollie led this work with partners at UC Davis and the Wharton Risk Center at UPenn, finding that commonly used depth-damage functions match poorly with observed losses recorded in the massive claims database of the National Flood Insurance Program (NFIP). Not only do the ubiquitous curves compiled by the US Army Corps of Engineers generally fail to represent the central tendency of damage at a given depth, but the sheer breadth of the variability around that central tendency renders the very concept of a one-to-one depth-damage relationship broadly invalid. Instead, we find that the stochastic nature of depth-damage can be represented by a bimodal beta distribution. Damage is generally concentrated below 10% and above 90% of a building’s value, and the probability of falling in the >90% category increases with depth. These findings have broad ramifications for infrastructure investment in the US and, closer to home, for the operation of flood catastrophe models.
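To illustrate what a bimodal (U-shaped) beta distribution implies, the sketch below draws damage ratios (damage as a fraction of building value) from a beta distribution whose two shape parameters are both below 1, which places most of the probability near 0 and near 1. The depth-to-parameter mapping in `beta_params` is entirely hypothetical, chosen only so that the chance of severe damage rises with depth; it is not the fitted relationship from the paper. Sampling uses the standard library's `random.Random.betavariate`.

```python
import random


def beta_params(depth_m: float) -> tuple[float, float]:
    """HYPOTHETICAL mapping from flood depth (m) to beta shape parameters (alpha, beta).

    Both stay below 1, so the density is U-shaped with peaks at 0 and 1.
    Lowering beta as depth rises shifts probability towards near-total loss.
    """
    alpha = 0.3
    beta = max(0.3, 0.9 - 0.2 * depth_m)
    return alpha, beta


def tail_probabilities(depth_m: float, n: int = 20_000, seed: int = 42) -> tuple[float, float]:
    """Monte Carlo estimates of P(damage < 10%) and P(damage > 90%) at a given depth."""
    alpha, beta = beta_params(depth_m)
    rng = random.Random(seed)  # seeded so repeated runs give identical estimates
    draws = [rng.betavariate(alpha, beta) for _ in range(n)]
    low = sum(d < 0.1 for d in draws) / n
    high = sum(d > 0.9 for d in draws) / n
    return low, high


if __name__ == "__main__":
    for depth in (0.5, 1.5, 3.0):
        low, high = tail_probabilities(depth)
        print(f"depth {depth} m: P(<10%) = {low:.2f}, P(>90%) = {high:.2f}")
```

Unlike a single depth-damage curve, which returns one damage value per depth, this representation returns a whole distribution, so a loss model built on it could sample outcomes rather than assume an average.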

Wing, O., Pinter, N., Bates, P., & Kousky, C. (2020), New insights into US flood vulnerability revealed from flood insurance big data. Nature Communications, 11, 1444.