Just under two weeks ago we attended an expert meeting at the UN to participate in discussions on a new Global Risk Assessment Framework.

In total, over 150 experts attended the meeting from a wide array of backgrounds, from scientists to policy makers and from experts in flood hazard to experts in biological hazards. Given the range of expertise at the meeting, the scope of the discussions was broad, but despite such diversity, a number of common themes emerged over the course of the two-day meeting.

Standardisation

“There is a need for standardisation and interoperability of data and models”

R. Martinez, Director, International Research Center on El Niño.

One of the common themes to emerge was a call for more standardised, consistent, and interoperable data and tools. Having widely different formats across different tools can be a real headache, particularly for non-experts or for organisations with limited resources. Standardised data/tools will remove many of the barriers that currently preclude decision makers from accessing the latest technologies.

In the insurance world, standardisation of data formats in risk modelling is common practice, enabling insurance companies of all sizes to access catastrophe models across all perils, from windstorm to wildfire. In recent years the emergence of modelling frameworks such as OASIS-LMF has taken this standardisation one step further, allowing expert hazard modellers from outside the industry to integrate their models into a common format that can be easily used by insurers. This aids the hazard expert/scientist by granting them access to a market that would otherwise be inaccessible. It also aids the insurers by giving them access to cutting-edge hazard models.

It seems that in terms of data standardisation and interoperability, there is much to be learned from the work currently being undertaken within the insurance sector.

Validation

“We wish insurers would/could make claims data available to scientists”

Dr P Towashiraporn, ADPC.

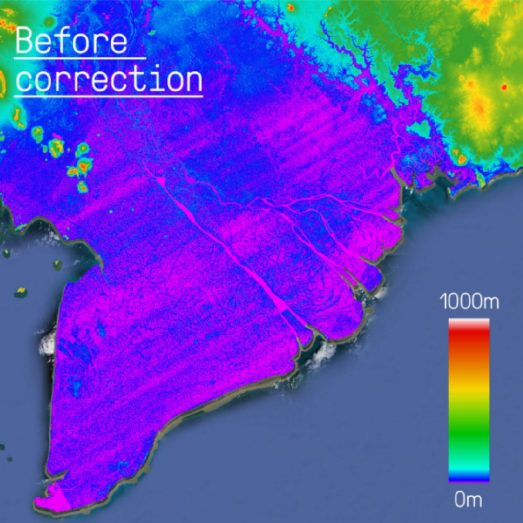

Since the conclusion of the previous Global Assessment Report, in 2015, there have been significant advances in risk modelling across many disciplines. This is perhaps most evident in our field, flood modelling, with capabilities changing rapidly over the past few years.

However, one point to emerge over the course of this two-day meeting was that “We need to enable the validation and robustness of models”. At Fathom, this is something that we advocate strongly. The only way to improve models is to identify areas in which they perform poorly and areas in which they break down entirely. This is why we put significant effort into validating our models and, critically, subjecting them to scrutiny through the academic peer review process (Wing et al. 2017). In addition to improving model performance, identifying where models perform poorly is critical in enabling these models to be used appropriately by decision makers.

Unfortunately, model validation is often difficult to undertake, particularly when attempting to validate large-scale loss models. This is where the insurance sector may have an important role to play. Claims data, gathered by insurance companies following major events, represent an invaluable source of validation data. However, these data are typically inaccessible, particularly to scientists, with strict legislation resulting in these data being locked away within individual insurers. Moving forward, the availability of these data to modellers would undoubtedly result in more robust and accurate loss models being built.
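To make the idea of validating a loss model against claims data concrete, here is a minimal sketch in Python. It is not the procedure used in Wing et al. (2017) or by any insurer. The function name `loss_validation_metrics`, the choice of metrics, and all of the numbers are illustrative assumptions.

```python
import math


def loss_validation_metrics(modelled, observed):
    """Compare modelled losses with observed claims for the same events.

    Hypothetical helper for illustration only. Both inputs are sequences
    of losses in the same currency, one entry per event.
    """
    if len(modelled) != len(observed) or not modelled:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(modelled)
    errors = [m - o for m, o in zip(modelled, observed)]
    # Mean error: positive means the model over-predicts on average.
    bias = sum(errors) / n
    # Root-mean-square error: typical size of the per-event error.
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    # Ratio of total modelled loss to total observed claims.
    total_ratio = sum(modelled) / sum(observed)
    return {"bias": bias, "rmse": rmse, "total_ratio": total_ratio}


# Made-up figures, not real claims data.
modelled_losses = [120.0, 80.0, 200.0]
observed_claims = [100.0, 90.0, 210.0]
metrics = loss_validation_metrics(modelled_losses, observed_claims)
print(metrics)
```

In this toy case the totals match while the individual events do not, which is one reason event-level claims data are more useful for validation than aggregate figures.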

Exposure Data

As previously stated, there have been significant improvements in hazard modelling in recent years, with ever more sophisticated models being produced to replicate natural hazards. However, hazard models form only one part of a risk/loss model. Equally important are exposure and vulnerability data.

Put simply, if you want to produce a model that can robustly estimate risk, you not only need to know about the magnitude and location of hazards, but also the location of assets/people and the potential impact of the modelled hazard on them. As one representative at the UN meeting remarked,

“Datasets on Hazard are becoming advanced; Exposure datasets are being left behind”

The spatial complexity of flooding in particular demands that accurate exposure data are used. Although it is true that global exposure data have been somewhat lagging, there are significant and exciting efforts being made to change this.

The Connectivity Lab at Facebook have been producing national scale population datasets that map population density with unprecedented accuracy. These data, based on hyper-resolution satellite imagery and machine learning techniques, are changing the way exposure datasets are created. At the AGU Fall Meeting next week, our team will be presenting work undertaken using these data, mapping populations exposed to flooding across numerous developing countries.

Although significant steps are being taken to improve global exposure datasets, adequate vulnerability data and models remain an area of considerable uncertainty. As Robert Muir-Wood of Risk Management Solutions put it, “

Within flood models, the relationship between hazard magnitude and damage/loss is notoriously uncertain and difficult to quantify. If any ground is to be made in tackling this problem, then it seems that more data, in particular detailed loss data, is going to be critical.
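The way hazard, exposure and vulnerability combine into a loss estimate can be sketched in a few lines of Python. This is a simplified illustration and not a description of any particular model. The depth-damage curve, asset values and flood depths below are all invented, and the names `damage_fraction` and `estimate_loss` are hypothetical.

```python
def damage_fraction(depth_m, curve):
    """Linearly interpolate a depth-damage curve.

    `curve` is a list of (depth in metres, damage fraction) pairs sorted
    by depth. Depths outside the curve are clamped to its end points.
    """
    if depth_m <= curve[0][0]:
        return curve[0][1]
    if depth_m >= curve[-1][0]:
        return curve[-1][1]
    for (d0, f0), (d1, f1) in zip(curve, curve[1:]):
        if d0 <= depth_m <= d1:
            return f0 + (f1 - f0) * (depth_m - d0) / (d1 - d0)


def estimate_loss(assets, curve):
    """Sum asset value (exposure) times damage fraction (vulnerability)."""
    return sum(a["value"] * damage_fraction(a["depth_m"], curve) for a in assets)


# Assumed vulnerability curve: no damage at 0 m, 40% at 1 m, 80% at 2 m.
HYPOTHETICAL_CURVE = [(0.0, 0.0), (1.0, 0.4), (2.0, 0.8)]

# Assumed exposure (asset values) with modelled hazard (flood depth) at each asset.
assets = [
    {"value": 100_000.0, "depth_m": 0.5},
    {"value": 200_000.0, "depth_m": 0.0},
    {"value": 50_000.0, "depth_m": 3.0},
]

total_loss = estimate_loss(assets, HYPOTHETICAL_CURVE)
print(total_loss)
```

Changing the assumed curve changes the result directly, which is why uncertainty in the depth-damage relationship carries straight through to the loss estimate.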

Summary

“There is a growing demand for comprehensive risk analysis… Member States have demanded a more comprehensive examination of global risk assessment to enable more robust estimation of potential risk.”

Mr. Robert Glasser, Special Representative of the United Nations Secretary-General for Disaster Risk Reduction.

To enable a more comprehensive examination of global risk, some areas mentioned above were identified as requiring specific attention. But more broadly speaking, I think other key messages emerged.

Firstly, that we as a community need access to more data. Aside from the development of new data and tools, a rich database of risk information already exists that is currently unavailable. I am referring primarily to loss and claims data. These data are critical if we are going to better understand exposure and vulnerability in the future.

Secondly, there is much to be learned from the insurance world. This industry has been using risk-modelling frameworks for over 20 years and is some way ahead in terms of its ability to use risk models to make decisions. Moreover, the requirement for standardised, consistent and interoperable data is something that the insurance industry has been aware of and working towards for some time.

Finally, the reason that the insurance industry has been able to make these advances is simply owing to capital. The amount of assets potentially exposed to natural hazards in the developed world necessitates that sufficient risk analytics be made available to allow insurers to make informed decisions. Consequently, private sector risk modelling is now a $500 million per annum industry. If we are to replicate these capabilities across developing regions, then the capital invested in detailed risk models must be replicated too. Insurance penetration across many parts of the world is limited, so there is limited demand from the private sector for risk modelling tools. It seems that more public sector investment is going to be crucial if we are to provide decision makers, including UN Member States, with the tools that they require.